Multi-tenancy no longer limited to MSP arena

In the Managed Service Provider (MSP) arena, multi-tenancy is becoming increasingly popular. More and more MSPs are using converged infrastructures to support multiple customers on one integrated platform, a trend supported by various suppliers of infrastructure management, data management and security software. Multi-tenancy clearly addresses the specific challenges of the MSP industry, but it also offers excellent opportunities for the enterprise market.

Big multinational companies have traditionally used many different solutions to support and manage their infrastructure. They may, for example, use solution "A" for back-up and recovery in one country and solution "B" in another region, while one specific department uses solution "C". The result is a proliferation of products performing the same tasks, which until now has prevented companies from getting a global view of what is happening in their IT. Global insight, however, is becoming increasingly important in view of global competition and the need for compliance. A multi-tenancy solution can remedy this lack of insight, supporting a company in two specific ways:

1. A true multi-tenancy solution provides one single platform for, say, global data and information management or security. It offers the CIO a complete view of the IT environment and enables the IT department to focus on services instead of managing hardware and software.

2. At the same time, the solution gives a department, facility or office all the features of a purpose-built product, so that IT managers at that location feel they are working with their own tailor-made platform.

Directory Services

One of the main features of a multi-tenancy management solution is the ability to integrate with Directory Services, which significantly enhances both security and usability. A system manager can assign rights to each user granularly, for example determining which user may restore a specific database and who has access to a production system. This is especially important as IT systems become increasingly complex and compliance becomes an important issue for every company. Tight integration with Active Directory also prevents the proliferation of generic admin accounts shared by various system managers. Such shared accounts are a well-known practice in IT, but they make it impossible to track and audit changes. When the management solution is integrated with Directory Services, system managers always log in with their own account, so their actions can always be tracked and traced.
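As a rough illustration of per-user rights checked against directory groups, the Python sketch below assumes a hypothetical in-memory snapshot of group memberships (`DIRECTORY_GROUPS`) and a hypothetical rights table (`GROUP_RIGHTS`); a real management solution would query the directory service itself. Every check is recorded under the named user's own account, which is what makes changes traceable once shared admin accounts disappear.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical snapshot of directory group memberships (user -> groups).
# A real solution would look these up in the directory service.
DIRECTORY_GROUPS = {
    "alice": {"dba-emea"},
    "bob": {"helpdesk"},
}

# Hypothetical rights table: group -> set of (action, resource) pairs.
GROUP_RIGHTS = {
    "dba-emea": {("restore", "sales-db"), ("view", "prod-cluster")},
    "helpdesk": {("view", "prod-cluster")},
}


@dataclass
class AuditLog:
    """Append-only record of who attempted what, and whether it was allowed."""
    entries: list = field(default_factory=list)

    def record(self, user: str, action: str, resource: str, allowed: bool) -> None:
        self.entries.append((datetime.now(timezone.utc), user, action, resource, allowed))


def is_allowed(user: str, action: str, resource: str, log: AuditLog) -> bool:
    """Grant the action if any of the user's groups holds the right; log every attempt."""
    groups = DIRECTORY_GROUPS.get(user, set())
    allowed = any((action, resource) in GROUP_RIGHTS.get(g, set()) for g in groups)
    log.record(user, action, resource, allowed)
    return allowed


log = AuditLog()
print(is_allowed("alice", "restore", "sales-db", log))  # True
print(is_allowed("bob", "restore", "sales-db", log))    # False
```

Because rights hang off directory groups rather than individual accounts, moving a person between teams changes their rights in one place, while the audit trail still names the individual.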

Charge back

A second important feature of a multi-tenant solution, and one that particularly addresses the current needs of companies, is charge-back capability. Whereas MSPs need this capability to bill their individual clients, an enterprise may use it to give internal clients insight into their use of IT or to charge departments internally. It also enables companies to give departments more freedom of choice over their IT investments: they know what they spend and can decide for themselves which solutions to invest in to reach their business goals.
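A minimal sketch of usage-based charge-back, assuming a single shared platform cost split in proportion to one usage metric per department (here, hypothetically, terabytes of protected back-up data). The function name and metric are illustrative and not taken from any particular product.

```python
def charge_back(total_cost: float, usage_by_dept: dict[str, float]) -> dict[str, float]:
    """Split a shared platform cost across departments in proportion to their usage."""
    total_usage = sum(usage_by_dept.values())
    if total_usage <= 0:
        raise ValueError("no usage recorded; nothing to allocate against")
    return {
        dept: round(total_cost * used / total_usage, 2)
        for dept, used in usage_by_dept.items()
    }


# Hypothetical month: 10 TB protected in total, platform cost of 10 000.
print(charge_back(10_000, {"finance": 2, "sales": 3, "hr": 5}))
# {'finance': 2000.0, 'sales': 3000.0, 'hr': 5000.0}
```

Rounding each share to cents can leave the total a cent or two off; a production scheme would assign any remainder explicitly.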

Control

The third feature is focused on control. As pointed out earlier, multi-tenancy provides a single platform that each location or department can use as if it were its own separate solution. This means the solution must support a granular set-up, for example delivering a specific view of each department's IT infrastructure. This not only enhances the quality of systems management but also contributes to the commitment of the IT staff. When staff can focus on their own dedicated systems instead of having to search through tens or hundreds of servers to find them, they are likely to make fewer mistakes and will feel more in control.

The IT world is changing from a focus on physical infrastructure to virtualised but very real services. MSPs lead the way by deploying new, converged infrastructures that fully profit from virtualisation and multi-tenancy solutions. They no longer deliver hardware or software, only services. In the enterprise world, too, IT departments are looking for ways to make this shift to a services-oriented organisation, and they can profit from the multi-tenancy approach already widely used in the MSP arena. It offers them global insight into IT, with sufficient room for a local touch, and ultimately enables them to become a true services organisation.

CIOs must consolidate to drive data centre transformation

Today's CIOs have to deal with the potential challenges of bring your own device (BYOD), mobile applications, software-defined networks (SDN), cloud sprawl, and usability demands, all on a static or shrinking budget.

As such, organisations are increasingly pursuing enterprise-wide virtualisation and consolidation as a means of improving the cost efficiency, manageability, security, resiliency and flexibility of the business. This is leading to a fundamental transformation in the modern data centre.

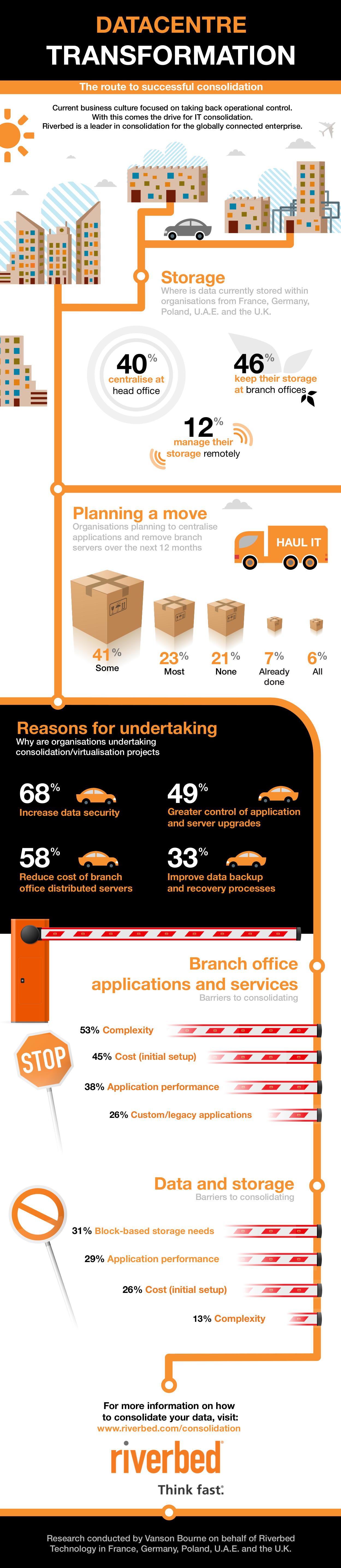

In fact, according to research conducted by Vanson Bourne on behalf of the application performance company, Riverbed Technology, 70% of IT decision makers across Europe and the Middle East are planning consolidation projects during 2013.

Virtualisation is now being extended to production and line-of-business applications. With this broader adoption, customers have begun to look for similar cost savings from broader consolidation efforts. They’re relocating servers from branch offices to consolidated data centres and even consolidating entire data centres and operating IT out of fewer locations, as well as moving certain workloads and data to the cloud.

The study questioned 400 CIOs across France, Germany, the United Arab Emirates, Poland and the United Kingdom. It revealed several major drivers and barriers that CIOs are considering when looking at consolidation.

Drivers

By consolidating and transforming the architecture of underutilised and distributed assets, a business can reduce infrastructure, administration, and power and cooling costs. At the same time it can reduce the volume of hardware to manage, and more easily apply automation policies, improve security, and streamline data protection procedures for disaster recovery and business continuity.

The study found that 68% of those planning a consolidation project cite data security as the key driver for their programmes. Reducing the cost of managing distributed servers at the branch office layer (58%) and gaining greater control of application and server upgrades (49%) were also reasons to consolidate. Although the importance of these drivers varied across regions, all of them ranked consistently high for every business, regardless of location or industry.

By consolidating infrastructure and adopting virtualised deployment paradigms, organisations can provision new services and locations and add scale to existing applications, all with minimal cost overhead and administration.

However, many still harbour concerns about the negative impact that projects like these may have on the business.

Concerns

While transforming the data centre through consolidation holds the potential to improve cost efficiency and mitigate risk, IT also needs to play a part in supporting productivity and revenue growth. This generally involves supporting users outside the data centre, at the far edges of the network, so post-consolidation performance is a major concern.

The research showed that over half of those questioned cited complexity as the biggest obstacle to consolidation. The cost of initial set-up and application performance over the WAN were also reported as concerns, by 45% and 38% of respondents respectively.

IT often questions the feasibility of sustaining performance after consolidation, but with the right support and solutions, all these issues can be overcome.

Advice

Consolidation and data centre transformation can fundamentally change a business’s application delivery environment, making it more reliant than ever on the network. As the network becomes critical for centralised applications, changes that used to affect a single location can now impact an entire region.

Another key consideration is that, once applications have been centralised within the data centre and started delivering proven cost savings and efficiencies, there is the potential for performance blind spots amidst the layers of virtualisation in the data centre.

The cost of change is sometimes high, but the true value of consolidation can only be accurately ascertained when it is weighed against the overall savings that can be achieved. This requires an assessment of other recent IT investments and of the cost of migrating to cloud services, for instance.

Regardless of whether a business is seeking to improve performance after consolidation or at the start of such a project, performance and simplicity need to be at the heart of the network infrastructure.

In particular, sluggish business applications and slow websites can be dealt with through a combination of WAN optimisation, QoS and web content optimisation (WCO). Combined, these technologies can help ensure that the increased distance between workers and the data and applications they use doesn't slow things down.
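Why distance hurts is easiest to see with a deliberately simplified model: each application round trip ("turn") costs one network round-trip time, and the payload costs its size divided by the link bandwidth. The sketch below ignores TCP windowing, packet loss and server processing time, and all figures are hypothetical; WAN optimisation broadly works by cutting both the number of turns and the bytes sent.

```python
def response_time_s(turns: int, rtt_ms: float, payload_mb: float, bandwidth_mbps: float) -> float:
    """Simplified estimate: per-turn round-trip delay plus serialisation time."""
    latency_s = turns * rtt_ms / 1000.0                # time spent waiting on round trips
    serialisation_s = payload_mb * 8 / bandwidth_mbps  # megabytes -> megabits, over the link rate
    return latency_s + serialisation_s


# Hypothetical chatty application: 200 turns and a 5 MB payload.
branch_lan = response_time_s(turns=200, rtt_ms=1, payload_mb=5, bandwidth_mbps=100)
over_wan = response_time_s(turns=200, rtt_ms=80, payload_mb=5, bandwidth_mbps=10)
# Same transaction with turns cut tenfold and payload halved (e.g. by caching and compression).
optimised = response_time_s(turns=20, rtt_ms=80, payload_mb=2.5, bandwidth_mbps=10)
print(f"{branch_lan:.1f} s, {over_wan:.1f} s, {optimised:.1f} s")  # 0.6 s, 20.0 s, 3.6 s
```

In this toy model the 80 ms round trips account for 16 of the 20 seconds over the WAN, which is why reducing application turns matters more than raw bandwidth for chatty applications.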

Of course, not every application should be moved out of branch offices, but the risks and complications of a distributed application infrastructure can be mitigated. Those few applications that do remain can be virtualised directly on a WAN optimisation appliance and managed remotely with industry standard tools.

Even when reducing the number of data centres, a company’s services and applications will be spread across several locations. Virtual application delivery controllers (ADCs) make it possible to accelerate user and replication traffic between data centres as well as ensure high availability of critical services.

Managing such modernised data centres effectively requires monitoring the traffic between virtual machines to keep tabs on the performance of virtualised services. With such monitoring tools in place, CIOs can be confident that their investment is paying dividends and that potential problems are fixed before they adversely affect the business.

Conclusion

The tools exist to enable a business to successfully consolidate and transform its entire data centre infrastructure. With the right partner, an organisation can easily ensure that network performance is maintained during the consolidation and transformation process. As such, enterprises can successfully and intelligently implement strategic initiatives such as virtualisation, consolidation, cloud computing and disaster recovery, without fear of compromising performance.

The benefits of a well-planned and executed data centre transformation approach can extend beyond merely cost savings, with companies improving the way they mitigate risk and grow. While many organisations have achieved some level of consolidation, beginning with server virtualisation, enterprise-wide consolidation efforts require overcoming greater complexity, latency, and traditional IT organisational silos.

The Vanson Bourne study highlights how CIOs are recognising the importance of centralising technology and data to gain these efficiencies. But some must overcome barriers before they can consolidate their applications or remove branch office servers.

By ensuring that performance challenges are identified, addressed, and managed, organisations can realise greater flexibility in where they locate IT resources. Doing so can mean greater economies of scale, control, and security.

Top six priorities for contact centres in 2013

If contact centres aren't preparing to embrace these six key trends, they have already fallen behind.

Too many contact centres have relied on tried-and-trusted technologies for too long. But emerging trends and technologies that have been on the cards for a while are becoming forces contact centres can't afford to ignore any longer.

Leave your complexity worries behind

There’s no enterprise complexity with cloud computing

“OK, admittedly there is complexity in the cloud. Any large-scale computing and communications infrastructure platform has complexity coming out of its ears,” says Rob Lith, Director of Connection Telecom. “But the point is that a good cloud-based solutions provider shields customers from it.”

The hidden pitfalls of unmanaged software: How unused applications are costing companies millions

When it comes to managing software licenses and assets, most companies focus on compliance – rather than cost-cutting. Organisations are over-licensing, buying more and more software in order to err on the side of caution. This can be an extremely costly mistake.

On the face of it, very few organisations have software asset management strategies in place, which exposes them to considerable financial risk. As long as software is bought and licensed legally, it is forgotten. Very few organisations stop to detect unused software, or shelfware (software that is never deployed in the first place), across their myriad systems, locations, PCs and servers, never mind reclaiming or reusing it.

Bundled deals, license options and virtualisation have all contributed to the complexity of software asset management. Organisations are struggling to reconcile the software they have actually deployed with the software they have licensed. Very few seem to know how much money is tied up in unused software and how much they could save by managing their software licenses efficiently. Add to that the fact that software vendors are becoming increasingly vigilant, clamping down through regular vendor audits that result in heavy fines, substantial back-charges and the threat of legal action. (Virtually all agreements with software vendors give the vendor the right to audit at least annually.)

Fearing being caught with unlicensed products, many organisations respond by buying even more software, ignoring the very real danger that it will never be deployed, used or add value. This results in unnecessary costs, as companies keep paying maintenance fees for software they aren’t using, or purchase additional licenses rather than reallocating existing ones. Analysis conducted on a number of local organisations showed that at least 20-40% of licenses deployed are not being used, and certainly not reclaimed. In the USA alone, this represents $12.3 billion in preventable and ongoing costs, with a typical enterprise of 10 000 users holding $4.1 million worth of unused software on its PCs and paying $1.1 million annually in ongoing maintenance for it.

Disorganisation is another concern, particularly not having a handle on what software is deployed in your organisation and how many copies of each product are installed. Typically, organisations purchase a number of licenses, known as their license entitlement. When more copies than the entitlement are deployed, they have to pay for the additional usage. This represents not only a financial risk but also a reputational one: for all intents and purposes, over-deployment that is not disclosed and procured is construed as license theft.
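The reconciliation described above boils down to comparing, per product, the license entitlement with the number of deployed installs. The sketch below assumes both are already available as simple counts (for example, exported from a purchasing record and an inventory scan); the product names are hypothetical.

```python
def reconcile(entitlements: dict[str, int], deployments: dict[str, int]) -> dict[str, dict[str, int]]:
    """Per product: installs beyond entitlement (shortfall) and licenses owned but not deployed (surplus)."""
    report = {}
    for product in sorted(set(entitlements) | set(deployments)):
        owned = entitlements.get(product, 0)
        deployed = deployments.get(product, 0)
        report[product] = {
            "shortfall": max(deployed - owned, 0),  # compliance exposure: procure or uninstall
            "surplus": max(owned - deployed, 0),    # candidates for reallocation before buying more
        }
    return report


owned = {"OfficeSuite": 500, "CADPro": 50}
installed = {"OfficeSuite": 540, "CADPro": 20, "PDFTool": 10}
for product, status in reconcile(owned, installed).items():
    print(product, status)
```

Note that deployed is not the same as used: a product can show no shortfall here and still be largely shelfware.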

CIOs should be asking themselves, on a regular basis: What do we own? What are we using? And, of course, what do we really need?

By deploying license-metering tools such as 1E’s AppClarity, which can reclaim unused applications both automatically and through a user-centric approach, companies can curb their unnecessary costs dramatically.
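License metering adds the other half of the picture: whether deployed software is actually used. The generic sketch below is not how AppClarity or any other product works internally; it simply flags installs whose last recorded use (from a hypothetical metering feed) is older than a chosen idle threshold, or that were never used at all.

```python
from datetime import date, timedelta
from typing import Optional


def reclaim_candidates(last_used: dict[tuple[str, str], Optional[date]],
                       today: date, idle_days: int = 90) -> list[tuple[str, str]]:
    """Return (machine, product) installs unused for more than idle_days, or never used."""
    cutoff = today - timedelta(days=idle_days)
    return sorted(
        install for install, used in last_used.items()
        if used is None or used < cutoff
    )


metering = {
    ("PC-01", "CADPro"): date(2013, 1, 15),  # idle for months
    ("PC-02", "CADPro"): date(2013, 6, 1),   # recently used
    ("PC-03", "DiagramTool"): None,          # installed, never launched
}
print(reclaim_candidates(metering, today=date(2013, 6, 30)))
# [('PC-01', 'CADPro'), ('PC-03', 'DiagramTool')]
```

The 90-day threshold is an arbitrary example; in practice it would be set per product, since some legitimately used tools (year-end reporting, for instance) run only occasionally.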

Software asset management tools should also be deployed and maintained to ensure that license entitlement and deployment remain in sync. The software efficiency report of 2011 showed that 52% of enterprises used spreadsheets to record software licenses, 12% used paper-based filing systems and 12% used nothing whatsoever. Keeping track of licenses is key to remaining cost-efficient.

In the end, there is no excuse for not having a software asset management strategy in place – there is technology that will do it for you. This is a quick and effective way of eliminating software waste in the company, while still safeguarding the organisation against a vendor audit.

Two choices to leap the information-quality-in-the-cloud hurdle

The fact that more business information is moving into the cloud, or that the cloud is being used to store an increasing share of business data, is not news. Nor is it news that one of the biggest challenges is ensuring that this information is of good quality, in line with enterprise norms.

That challenge remains, however, particularly when the information resides in off-premise, cloud-based systems.

The hurdle has always been that organisations storing information in the cloud are subject to service level agreements (SLAs) with their service providers that focus on access availability, speed of delivery, data recovery and security, but never on maintenance or watchful and responsible care in accordance with enterprise processes, procedures and practices. As a result, the information can never be fully trusted.

Integration and quality concerns, which underscore the difference between trusted and untrusted information, require that both cloud and on-premise information and content be subject to the same standards. They must undergo the same rigours, the same exchange protocols, integration and quality processes, domain-specific business rules and so on, so that all information is managed uniformly and in a standardised manner.

One option is to map data between on-premise systems and those in the cloud; if so, this must be done through a standard, common set of logic and rules that governs the information and content. Such an architecture inevitably involves some compromise in processing and storage performance, as any type of exchange does, but that compromise should not constrain the design to the extent that the management of unstructured cloud information and content is largely ignored.

Another approach is to exchange only the information or content that applications and users require for queries or reports, which keeps network traffic and storage requirements to a minimum. Virtualisation and federation technologies can be exploited here because they do not physically move all the information or content from its place of origin; instead they reference it, and only the requisite parts are actually copied. This offers another enormous advantage: the information and content are left intact at source and managed by local standards and security.

Failure to resolve this issue once a cloud-based architecture is adopted will exacerbate storage and duplication issues, which in turn deepen the lack of trust in business systems. Experience shows that when that happens, the speed, flexibility and accuracy of information supply to business users break down, with the result that organisations become inflexible and lethargic in the face of rapid market shifts, and their margins spiral downwards.

More and more companies face the dilemma of which approach to take. In an October 2011 report, IDC VP for storage systems Richard Villars stated that companies worldwide spent $3.3 billion on public cloud-based storage in 2010. He projected a compound annual growth rate of 28.9%, which would put global spend at $11.7 billion by 2015. By comparison, total spend on on-premise storage was around $30 billion in 2010, and IDC's forecast for 2015 keeps it ahead, at more than $37 billion. Interestingly, IDC's report projects that service providers will increase their spend from $3.8 billion in 2010 to $10.9 billion by 2015.
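The projection above is plain compound growth: a starting value multiplied by (1 + rate) once per year. The short sketch below checks the cloud storage figure; the second calculation derives the annual growth rate implied by the service-provider figures, which is arithmetic on the quoted numbers rather than a figure stated in the report.

```python
def project(start: float, cagr: float, years: int) -> float:
    """Value after compounding `start` at annual rate `cagr` for `years` years."""
    return start * (1 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# Public cloud storage: $3.3bn in 2010 growing at 28.9% a year to 2015.
print(round(project(3.3, 0.289, 5), 1))            # 11.7 ($bn)
# Service-provider spend: $3.8bn (2010) to $10.9bn (2015).
print(round(implied_cagr(3.8, 10.9, 5) * 100, 1))  # 23.5 (% a year)
```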

So, once the cloud is incorporated into enterprise information strategies, whichever option a company chooses, it will face a growing need to extend its existing on-premise information management processes and capacity to accommodate external cloud information and content.

In the Global Village, does geography still matter?

Saying that we’re living in a global village or a virtual world is stating the obvious. Video calling, high-speed Internet, social media and teleconferencing have given us the ability to instantly connect, regardless of our location. Nowhere is this more obviously demonstrated than in the realm of call centres where customers in countries such as the UK and US regularly interact with support services in the Philippines, India and of course, South Africa.

For the most part, this system has worked well. Research has shown that most people don’t care where their call centres are based – as long as they can deliver adequate support – and companies have cut their labour and real estate costs dramatically. As Martin Conboy, editor of the Sauce (an outsourcing news service in Australia) puts it: “If companies can access talented and less expensive labor in somewhere like the Philippines, why would a business pay more for the same thing in their own country?”

But lately there seems to be a backlash. In his State of the Union address earlier this year, US President Barack Obama urged American businesses to bring jobs back to the US and called for an end to tax breaks for companies that outsource. Last year, Spanish-owned bank Santander withdrew its call centres from India and returned them to the UK, citing “customer frustration with geographically and culturally distant call centre operatives”. It wasn’t the only one: BT, Powergen and New Call Telecom followed suit, as did several American credit card companies.

Ostensibly, these companies have cited the inability to relate to customers as the reason for the withdrawal. In fact, a rather bold article in the Washington Post claimed that India is rapidly losing its claim to be the call centre capital of the world to the Philippines, because Philippine culture more closely resembles that of the United States. But does the ability to discuss soap operas or the weather really affect your customer service experience? Geography, in my opinion, was not the problem (albeit a convenient excuse) in the examples above. Dig a little deeper and I expect you’ll find that less time and money was spent on training and managing staff than was prudent.

Virtualisation and call centres

Cloud-based technology has many benefits, such as economies of scale, mitigation of hardware failure and access to accurate information on demand. It also allows companies to set up a call centre anywhere in the world, across different time zones, which may improve business continuity.

Well-trained call centre agents are continuously educated on new and existing products and services, and are able to provide detailed information that enables them to troubleshoot a wide range of inquiries. They are also equipped to handle queries particular to the region they serve, such as pricing queries or rate plans that apply only in that geographic area.

If queries are resolved promptly and efficiently, does the customer really care where the call centre is based? I doubt it.

At the end of the day, customers want the same thing from their call centres: clear lines of communication, good customer service and after-sales care if needed. Whether the individual speaks in an accent or not is irrelevant – as long as they can be easily and clearly understood.

The reality is that offshore hubs such as India, the Philippines and South Africa are not going out of business any time soon, as long as they can offer cheaper and more efficient call centres and refuse to compromise on quality. The BPO boom in India can be attributed to cheap labour costs and the country’s pool of skilled, English-speaking professionals, both of which can be found in abundance in South Africa. And considering that, from an economic viewpoint, call centres outsourced to India alone have created 800 000 jobs, we should make a point of competing for a spot in that market.

We’re not limited by our location anymore and customers realise that. They care about your level of service and the efficiency of your staff. If you can manage that, you will succeed – no matter where your call centre is located.

Should your company move to the cloud?

Cloud-based communications have clear benefits, but whether those benefits will accrue to your organisation depends on a number of factors. If your enterprise answers yes to any of the questions in the checklist below, you may be in the market for a hosted PBX.

Are you likely to expand?

Entities with significant potential for branch-like expansion, such as a bank with a medium-to-large footprint, a retail chain or a service station franchise, can derive the most value from cloud telephony. Every time a new branch or franchisee comes online, the expense of an on-site PBX has real potential to sink the business case. With cloud, the franchisor or corporate head office can offer hosted telephony into the bargain, significantly lowering the entry barrier for local businesses. In addition, this model of telephony is much easier to roll out and to manage for uptime, and the "on-net" savings possible with cloud further strengthen the business case.*

Are your employees mobile?

Is a significant portion of your workforce mobile, either constantly on the road or remotely stationed? A cloud communications configuration can provide satellite working units with full enterprise collaboration and unified communications at low cost. Even user administration tasks can easily be done via a Web portal, from any device or operating platform.

Do you need flexible communications capacity?

Cloud computing operates on a "virtualised" design principle, where physical separations between resources like disk drives or servers are irrelevant: all the computing power these resources represent is pooled in an amorphous "cloud" of divisible capacity. In such a scenario, you're not bound by the limitations or excess capacity of discrete servers; you can simply procure just enough virtual capacity for your use in any given month (or shorter time increment). This makes sense for campaign call centres or for seasonal variation in the demands on your business communications.

Is your power supply unpredictable?

Cloud data centres are amply provided with protection against power surges and cuts. The alternative is unappealing – a high-end UPS (uninterruptible power supply) that only keeps you going for so long, or costly on-site power generation.

Does your business rely on collaboration?

You may want to employ cloud techniques to give access to at least some applications, such as hosted enterprise resource planning (ERP) for managing suppliers, or hosted collaboration applications for shared workflow. It is also highly advisable to have hosted communications to cheaply bring partners "on-net" if there is a business relationship requiring constant communication. This applies, for instance, to retailers getting purchasing authorisation from a customer's credit card institution.

Do you need to standardise?

Cloud also makes sense where you want group businesses to standardise on certain applications, such as financial and ERP.

These scenarios are by no means exhaustive, and new use cases are constantly emerging. Chances are that you will discover a few of your own if you see benefit in having access to shared or centralised ICT infrastructure with best-in-class business continuity assurance.

* On-net savings can accrue between branches, to head office, and even to business partners if the installation provides for it.

Is your on-demand call centre the real deal?

Insist on “elastic” scalability and responsive provisioning at low cost

One of the most topical issues in call centres currently is the “on-demand call centre” (ODCC). It implies being able to grow and shrink your agent pool in line with the ebb and flow of demand, without worrying about being under- or over-provisioned with resources (bandwidth and scale of application support).

In short, resourcing mustn’t be a worry, and it must happen seamlessly. Required changes must happen at the speed of business, and you most certainly don’t want to be charged for excess capacity or ever be left under-resourced.

The efficiency of this model promises significant savings. Nevertheless, buyers should carefully scrutinise the offer on the table: does it give you all the possible benefits of an on-demand call centre, and at what cost and compromise?

Identifying true ODCCs

The example of TAKEALOT.co.za, an online retailer, serves to illustrate what a true on-demand call centre entails:

‘Elasticity’

TAKEALOT’s promise to customers is to deliver any orders placed before 1pm on the same day. To handle the spike in demand, the company has to sharply increase its normal capacity before 1pm and back down again shortly afterwards. This must happen quickly and seamlessly – an infrastructural delivery capability best described as “elastic”.

Several things work in concert to make elasticity possible. The seamlessness of the variation is firstly the result of delivering bandwidth and application resources as a managed service – that is, in the background.

Virtualisation of the computing infrastructure is another contributing factor. As an architectural design feature that separates the customer’s requirement for capacity from physical infrastructural resources, virtualisation makes it easier to deliver just enough capacity.

By contrast, non-virtualised call centres are by their very nature over-provisioned to cater for times of peak demand. They are therefore routinely underutilised, as their spare capacity cannot be divorced from the underlying infrastructure and put to better use elsewhere. All this comes at immense upfront capital expenditure, plus ongoing operations and maintenance costs over multiple years.
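The cost of over-provisioning is easy to quantify in agent-hours. The sketch below compares a fixed centre staffed for its peak hour all day with an elastic one that matches capacity to each hour's demand; the demand profile is invented for illustration, loosely shaped like a pre-1pm spike.

```python
def provisioned_agent_hours(hourly_demand: list[int], elastic: bool) -> int:
    """Agent-hours paid for: peak capacity every hour (fixed) or each hour's actual demand (elastic)."""
    if elastic:
        return sum(hourly_demand)
    return max(hourly_demand) * len(hourly_demand)


# Hypothetical agents needed per hour, 08:00 to 16:00, peaking in the 12:00 hour.
demand = [10, 12, 15, 30, 45, 20, 10, 8]
fixed = provisioned_agent_hours(demand, elastic=False)
elastic = provisioned_agent_hours(demand, elastic=True)
print(fixed, elastic, f"{elastic / fixed:.0%}")  # 360 150 42%
```

In this example the fixed centre pays for 360 agent-hours to serve 150 agent-hours of actual demand, running at roughly 42% utilisation.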

Alacrity

TAKEALOT’s other non-negotiable requirement was speed of provisioning, as it needed to be up and running within six weeks.

The way to achieve this is through a standards-based hosted call centre platform that can easily and quickly be made to slot into the customer’s call environment. With a standards-based platform, all the customer needs is an Internet Protocol-based broadband connection to be up and running in no time.

Non-standard platforms, on the other hand, cannot claim to be on-demand, as they require an immense upfront integration effort, leaving the provider powerless to deliver much this side of six months.

Frugality

ODCCs are also typically less expensive than their proprietary counterparts, which are notorious for requiring customers to buy the platform's entire spectrum of functionality, including features they will never use.

By contrast, a true ODCC based on open-source software offers core functionality at per-extension pricing close to that of a normal telephony service. At a reasonable additional cost, extras such as dialling campaigns, agent queues and reporting can be included.

In TAKEALOT’s case, for instance, call centre management was provided with reports of peak-time call distribution across agents, enabling it to ramp up resources when necessary. Caller ID functionality revealed the numbers of missed calls, allowing the service-obsessed company to phone back customers it had missed.

However, should a call centre not have use for this functionality, it need not procure it.
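Reports of this kind can be produced from basic call records. The sketch below assumes a hypothetical record format of (hour, agent, caller ID), with `None` as the agent for unanswered calls; it counts answered calls per agent per hour and builds a de-duplicated call-back list of missed callers.

```python
from collections import Counter, defaultdict
from typing import Optional

CallRecord = tuple[int, Optional[str], str]  # (hour of day, agent or None if missed, caller ID)


def summarise(calls: list[CallRecord]) -> tuple[dict[int, Counter], list[str]]:
    """Answered calls per agent per hour, plus missed callers in first-missed order."""
    per_hour: dict[int, Counter] = defaultdict(Counter)
    missed: list[str] = []
    for hour, agent, caller in calls:
        if agent is None:
            missed.append(caller)
        else:
            per_hour[hour][agent] += 1
    return per_hour, list(dict.fromkeys(missed))  # drop repeat callers, keep first-seen order


calls = [
    (12, "agent-a", "caller-1"),
    (12, "agent-b", "caller-2"),
    (12, None, "caller-3"),
    (12, "agent-a", "caller-4"),
    (13, None, "caller-3"),  # same customer missed twice
]
per_hour, call_back = summarise(calls)
print(dict(per_hour[12]), call_back)  # {'agent-a': 2, 'agent-b': 1} ['caller-3']
```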

Built for purpose

In short, an ODCC can rapidly meet the core functionality and capacity needs of the average call centre, by virtue of being hosted, virtualised and standards-based. This can greatly shorten the time to market for new call centres and decrease their cost, provided the buyer carefully scrutinises the contract.

Virtualisation gives decision makers choice, control

Cloud virtualisation, the environment through which all areas of the business can be controlled off-site or ‘virtually’, presents decision makers with an interesting scenario: today they have more choice about how to manage and sustain the business using strategically placed ICT infrastructure without breaking the bank.

Business operators have to sustain the highest levels of activity with minimal impact on resources, and this has helped to spur interest in virtualisation.

There are many areas where virtualisation impacts the business. We know that a range of hardware, software and other network infrastructure can be managed this way, used and applied throughout the network without owners having to worry about maintenance and sustainability.

Server virtualisation is a good example. It uses a software layer (a hypervisor) to create more ‘virtual’ servers than there are physical ones.

Administration and practical application of this infrastructure take place in a non-physical way. Many products can form part of the virtual environment, including servers, platforms, applications, desktops and networks.
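Running more virtual servers than physical ones is, at its core, a packing problem. The sketch below uses first-fit decreasing, a common bin-packing heuristic, to place virtual machines on hosts by memory alone; the VM names, sizes and 64 GB host capacity are hypothetical, and real placement also weighs CPU, storage and headroom for failover.

```python
def place_vms(vm_sizes: dict[str, int], host_capacity: int) -> list[list[tuple[str, int]]]:
    """First-fit decreasing: largest VMs first, each onto the first host with room."""
    hosts: list[list[tuple[str, int]]] = []
    free: list[int] = []  # remaining capacity per host, same order as `hosts`
    for name, size in sorted(vm_sizes.items(), key=lambda kv: kv[1], reverse=True):
        if size > host_capacity:
            raise ValueError(f"{name} ({size}) exceeds host capacity {host_capacity}")
        for i, spare in enumerate(free):
            if size <= spare:
                hosts[i].append((name, size))
                free[i] -= size
                break
        else:  # no existing host had room: bring another physical host into use
            hosts.append([(name, size)])
            free.append(host_capacity - size)
    return hosts


vms = {"web1": 8, "web2": 8, "db": 24, "mail": 12, "file": 16, "app": 12}  # GB of RAM
for n, host in enumerate(place_vms(vms, host_capacity=64), start=1):
    print(f"host {n}: {host}")
```

Here six virtual servers fit on two physical hosts. First-fit decreasing is fast and usually close to optimal, but it does not guarantee the minimum number of hosts.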

Being able to focus on business strategy without being distracted or side-tracked by infrastructure management issues such as Total Cost of Ownership and Return on Investment, remains a key advantage in today’s marketplace.

A virtualised environment means more productivity and more time to focus on strategic core areas. This is because virtualisation is based on the centralisation of resources, which eliminates the need to add more software and organise additional resources to run operations. It is a more streamlined approach to business management.

Virtualisation also reduces the use of space and physical infrastructure, and it offers flexibility and scalability.

Physical resources are spread across the business, to all departments, via virtual networks, which improves access and efficiency.

With the rapid advancement of cloud computing and its parallels to virtualisation, it is easy to understand why cloud virtualisation and cloud computing are mistakenly referred to as one and the same. In reality, these are two very different technologies with different dynamics; however, both are focused on helping the decision maker gain more by using less.

With cloud computing, by contrast, the data and processing reside in the cloud, based on a Software as a Service model.

The extent to which these technologies are acquired and implemented in business may vary and depends on a variety of factors. It is up to decision makers to understand those factors and set policy accordingly.

What is also true, however, is that these technologies must be part of any credible operational strategy – this is critical for survival.
